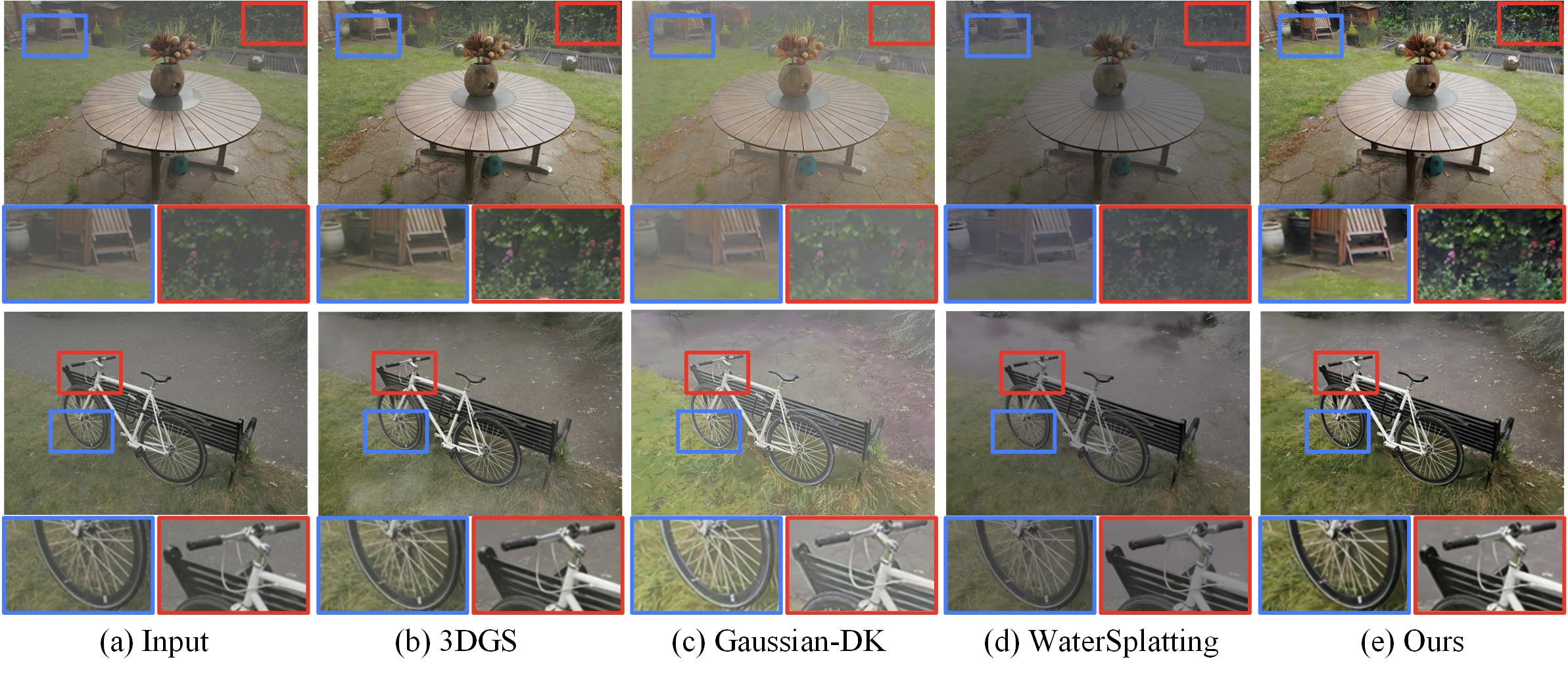

3D Gaussian Splatting (3DGS) has recently achieved remarkable progress in novel view synthesis. However, existing methods rely heavily on high-quality input data and struggle with degraded scenes that exhibit multi-view inconsistency, which leads to inferior rendering quality. To address this challenge, we propose RestorGS, a depth-aware Gaussian Splatting method for efficient 3D scene restoration that flexibly restores multiple types of degraded scenes within a unified framework. Specifically, RestorGS consists of two core designs: Appearance Decoupling and Depth-Guided Modeling. The former exploits appearance learning over spherical harmonics to decouple clear and degraded Gaussians, thus separating the clear views from the degraded ones. In tandem, the latter leverages depth information to guide the degradation modeling, thereby facilitating the decoupling process. Benefiting from this optimization strategy, our method achieves high-quality restoration while retaining real-time rendering speed. Extensive experiments show that RestorGS significantly outperforms existing methods in underwater, nighttime, and hazy scenes.